For an introduction of JJQA => lick here

News: Related code scripts and evaluation outputs will be available in the GitHub repository before 2023/11/17 !

On 7th November, OpenAI launched many new AI applications, including the next generation model, GPT-4 Turbo, and an agent-like AI application with specific instructions to call tools such as Retrieval to perform tasks, Assistants API. We tried to evaluate GPT-4 Turbo and Assistants API with the Knowledge Retrieval Tool in JJQA.

GPT-4 Turbo

LLM | Method | Precision | Recall | F1 | Date |

ernie-turbo | wo_info | -0.0350 | 0.1568 | 0.0511 | 2023/11/06 |

ernie-turbo | w_song | 0.2472 | 0.5765 | 0.3895 | 2023/11/06 |

ernie-turbo | w_rf | 0.3600 | 0.6528 | 0.4864 | 2023/11/06 |

chatglm2_6b_32k | wo_info | 0.0466 | 0.1787 | 0.1066 | 2023/11/05 |

chatglm2_6b_32k | w_song | 0.2361 | 0.4606 | 0.3335 | 2023/11/05 |

chatglm2_6b_32k | w_rf | 0.4650 | 0.6477 | 0.5436 | 2023/11/05 |

qwen-turbo | wo_info | 0.2331 | 0.2150 | 0.2208 | 2023/11/05 |

qwen-turbo | w_song | 0.7673 | 0.8041 | 0.7804 | 2023/11/05 |

qwen-turbo | w_rf | 0.8600 | 0.8251 | 0.8386 | 2023/11/05 |

baichuan2-7b-chat-v1 | wo_info | 0.1755 | 0.2012 | 0.1857 | 2023/11/05 |

baichuan2-7b-chat-v1 | w_song | 0.4635 | 0.6324 | 0.5371 | 2023/11/05 |

baichuan2-7b-chat-v1 | w_rf | 0.6567 | 0.7272 | 0.6851 | 2023/11/05 |

gpt-3.5-turbo | wo_info | 0.2201 | 0.1983 | 0.2061 | 2023/11/06 |

gpt-3.5-turbo | w_song | 0.8031 | 0.7812 | 0.7884 | 2023/11/06 |

gpt-3.5-turbo | w_rf | 0.8110 | 0.7484 | 0.7758 | 2023/11/06 |

gpt-4 | wo_info | 0.2426 | 0.2377 | 0.2376 | 2023/11/06 |

gpt-4 | w_song | 0.8405 | 0.8587 | 0.8464 | 2023/11/06 |

gpt-4 | w_rf | 0.8865 | 0.8643 | 0.8732 | 2023/11/06 |

gpt-4-1106-preview | wo_info | 0.2345 | 0.2061 | 0.2179 | 2023/11/09 |

gpt-4-1106-preview | w_song | 0.8411 | 0.8117 | 08231 | 2023/11/09 |

gpt-4-1106-preview | w_rf | 0.8230 | 0.7678 | 0.7921 | 2023/11/09 |

Though GPT-4 Turbo (gpt-4-1106-preview) performs better than many other models, it is worse than GPT-4 and there exists an obvious drop on Recall. In addition, the performance with specific pieces of lyrics (w_rf) is worse than that with the whole lyrics of the song (w_song), which hardly happens for other models .

Assistants API

Assistants API could automatically call a tool for retrieval and we just consider the cleaned json file with pruned information, hf_song.json, as the knowledge base. We apply GPT-4 Turbo (gpt-4-1106-preview) as the bone model. To save money, we just test 161 Q&As as an example. Hope that someone rich could contribute the complete evaluation metric.🤞

q:

镜子和你相比有什么不同?

annos:1

logs:

镜子不藏秘密 [0]。

[0] 如果不是那镜子不像你\n不藏秘密\n我还不肯相信没有你\n我的笑更美丽 from hf_song.json

a:

镜子不藏秘密。First, when everything goes well, Assistants API could generate a correct answer with related cite annotations.

But, sometimes it is not reliable enough.

q:

热量有什么作用?

annos:0

logs:

搜索结果没有直接回答关于热量作用的信息,请上传相关的文档或提供更多上下文。

a:

搜索结果没有直接回答关于热量作用的信息,请上传相关的文档或提供更多上下文。Sometimes, retrieval does not work. It seems that it would always demonstrate the failure instead of give a hallucinatory answer when related knowledge is not retrieved. It is a fantastic trend.

q:

怎样我的意志会更坚决?

annos:0

logs:

我流汗意志更坚决。

a:

我流汗意志更坚决。q:

你最大的极限是什么?

annos:0

logs:

无限【4†source】。

a:

无限【4†source】。Sometimes, the right answer is based on the knowledge correctly, but annotations are missing or fake. Normal retrieval methods are not likely to cause these problems. So, I think it seems that the retrieval process is possibly based on a LLM.

q:

谁敲开了命运的觉醒?

annos:1

logs:

男孩 [0]。

[0] 敲开了命运的觉醒\n哦 from hf_song.json

a:

男孩。Sometimes, the annotations are not precise enough.

q:

为什么要点燃心房?

annos:1

logs:

为了重新燃起战火 [0]。

[0] 你的双手甩开刚好的微妙\n然后战火再燃烧\n我们背对背拥抱 from hf_song.json

a:

为了重新燃起战火。Sometimes, wrong pieces of lyrics are retrieved, leading to a wrong answer.

q:

什么造就了不凡?

annos:1

logs:

不凡由浪漫血液造就,它体现为不预留余地的热情,以及对真爱的信仰 [0]。

[0] "lyric": "浪漫血液 - 林俊杰 (JJ Lin)\n词:姚若龙\n曲:林俊杰\n编曲:Martin Tang\n制作人:林俊杰\n从一个眼神一次谈心\n到变懂得变熟悉\n从累积感动累积回忆\n到最甜蜜哦\n从闹意见闹情绪\n到伤感情耶\n从都感觉委屈\n到都好强背对背向悲伤走去\n无论再久还是牢记\n无论再远还是关心\n凡是爱过就都烙印在记忆\n用失眠去反省\n爱凝结成泪的轨迹\n学会维系爱情以后不用再惋惜\n无论再苦还是动心\n无论再难还是努力\n服从感性抗拒理性\n不愿活着心却死去\n用赤裸去热情\n不预留余地哦\n在我身上流着浪漫血液\n哦\n我不要冷静耶哦\n不屑逃避哦\n不怕打击耶\n始终相信有真爱将伤痛抚平哦\n无论再久还是牢记\n无论再远还是关心\n凡是爱过就都烙印在记忆\n用失眠去反省\n爱凝结成泪的轨迹\n不断为难自己想借遗憾进化自己\n哦无论再苦还是动心\n无论再难还是努力\n服从感性抗拒理性\n不愿活着心却死去\n用赤裸去热情\n不预留余地哦\n在我身上流着浪漫血液\n什么伤口都会痊愈\n炽热的渴望是勇气\n在我身上流着浪漫血液"

from hf_song.json

a:

不凡由浪漫血液造就,它体现为不预留余地的热情,以及对真爱的信仰。Sometimes, it is just ridiculous😵💫.

In a short, it seems that there are limitations on the RAG method, even for OpenAI. How to effectively and semantically retrieve the knowledge base and how to precisely annotate cites may be keys.

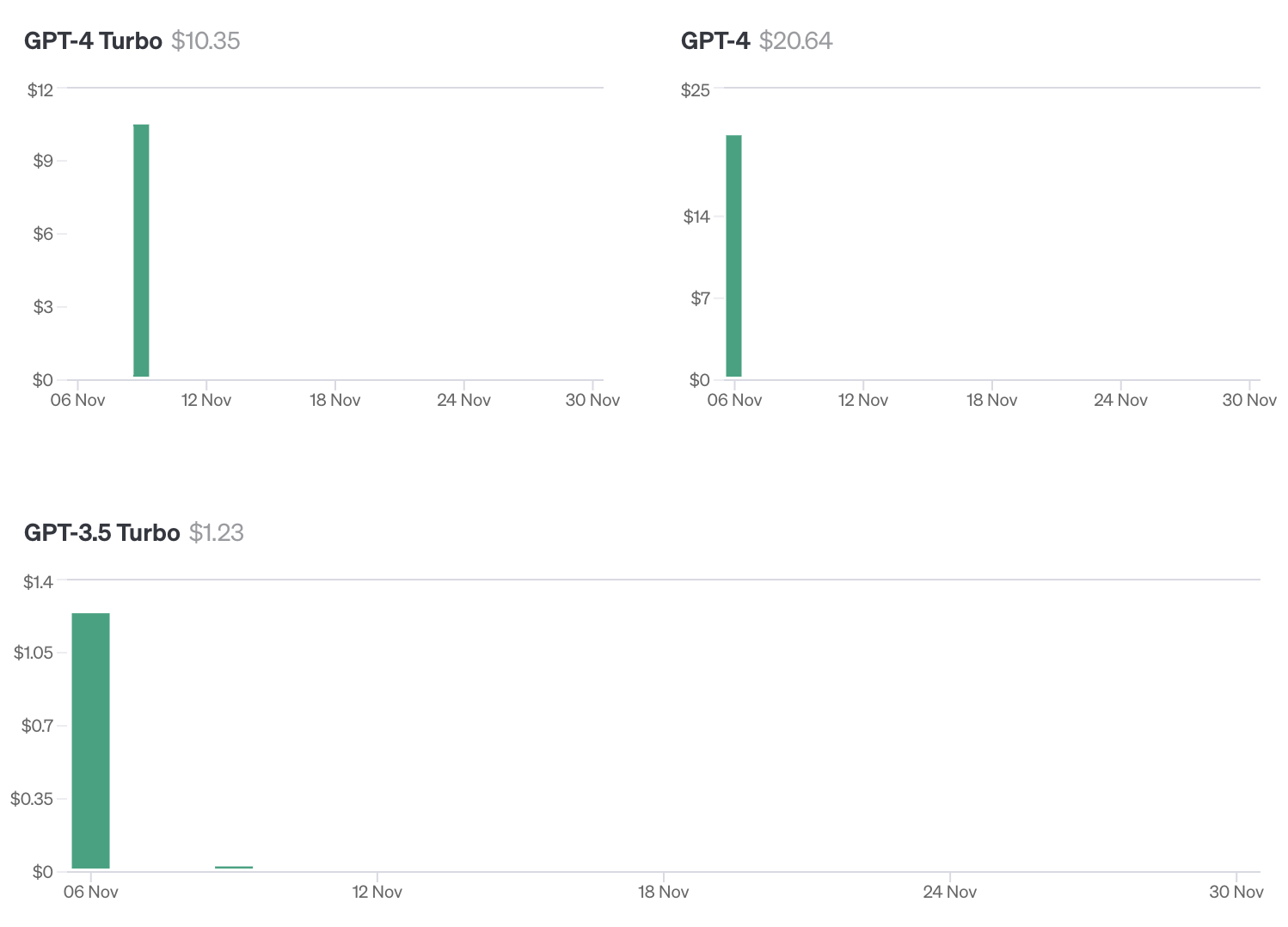

FEE

As a reference, we would like to share the evaluation fee for JJQA in the OpenAI platform. The terms on gpt-3.5-turbo, gpt-4 and GPT-4 Turbo (gpt-4-1106-preview) (Assistants API not included) are as follows.

It is worth noting that the pricing is changed on 07 Nov, so the fee on GPT-4 Turbo is much cheaper than that on GPT-4. It saved a looooooooooooooooooot😁. For a trial, I strongly suggest GPT-3.5 Turbo as a start first.

Besides, for Assistants API, the price is rather higher. I spent about 29.4$ for the 161 Q&As' evaluation. Since the Retrieval Tool is free for now, it seems that the tokens for hidden interactions are included. To be honest, I do not know. Maybe there exists some clear statistical details which I have not found.

Overall

Overall, GPT-4 Turbo and Assistants API are good applications. But, some limitations still exist. Hope that we could neither overestimate nor underestimate these applications and try to make some improvement.