SPELL: Semantic Prompt Evolution based on a LLM

https://arxiv.org/pdf/2310.01260.pdf

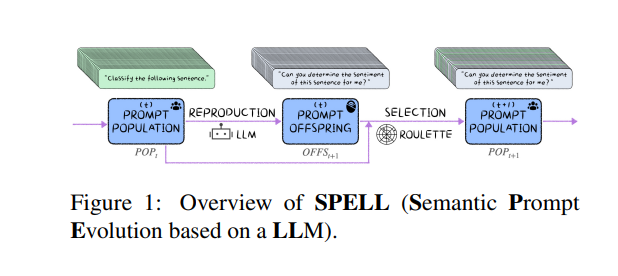

Previous prompt optimization methods could neither adjust a prompt globally nor keep it fluent. In this paper, motivated by evolutionary algorithms and by the ability of LLMs (Large Language Models) to causally generate coherent text from existing content, we treat an LLM as a causal prompt generator for the reproduction step and construct a black-box evolutionary algorithm, SPELL (Semantic Prompt Evolution based on a LLM), to improve prompts.
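To make the idea concrete, here is a minimal sketch of a black-box evolutionary loop in which a generator function plays the role of the LLM during reproduction. It is an illustration under stated assumptions, not the paper's implementation: the function names, the fitness-proportional parent selection, the elitist survival rule, and the toy `toy_generate` / `toy_fitness` stand-ins are all hypothetical. In practice `generate` would call an LLM and `fitness` would score a prompt on a development set.

```python
import random
from typing import Callable, List, Tuple

Scored = Tuple[str, float]  # (prompt, fitness score)

# Hypothetical toy task: a prompt scores higher the more keywords it mentions.
KEYWORDS = ["classify", "sentiment", "positive", "negative"]


def toy_fitness(prompt: str) -> float:
    """Stand-in for evaluating a prompt on a dev set (assumption)."""
    text = prompt.lower()
    return sum(k in text for k in KEYWORDS) / len(KEYWORDS)


def toy_generate(parents: List[Scored]) -> str:
    """Stand-in for the LLM: extend the best parent with one missing keyword."""
    best, _ = max(parents, key=lambda p: p[1])
    missing = [k for k in KEYWORDS if k not in best.lower()]
    return f"{best} {missing[0]}" if missing else best


def evolve_prompts(
    initial: List[str],
    generate: Callable[[List[Scored]], str],
    fitness: Callable[[str], float],
    generations: int = 5,
    n_parents: int = 2,
    n_children: int = 4,
    seed: int = 0,
) -> Scored:
    rng = random.Random(seed)
    population = [(p, fitness(p)) for p in initial]
    size = len(population)
    for _ in range(generations):
        children = []
        for _ in range(n_children):
            # Selection: sample parents in proportion to fitness (assumed scheme).
            weights = [max(score, 1e-6) for _, score in population]
            parents = rng.choices(population, weights=weights, k=n_parents)
            # Reproduction: the generator writes a new prompt from the parents.
            child = generate(parents)
            children.append((child, fitness(child)))
        # Survival: keep the best `size` prompts among parents and children.
        merged = sorted(population + children, key=lambda x: x[1], reverse=True)
        population = merged[:size]
    return population[0]


initial_prompts = ["Classify the review.", "Is it good?"]
best_prompt, best_score = evolve_prompts(initial_prompts, toy_generate, toy_fitness)
print(best_prompt, best_score)
```

Because survivors are drawn from parents and children together, the best score in this sketch never decreases between generations. The only black-box requirement is that `fitness` returns a number for any prompt string.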

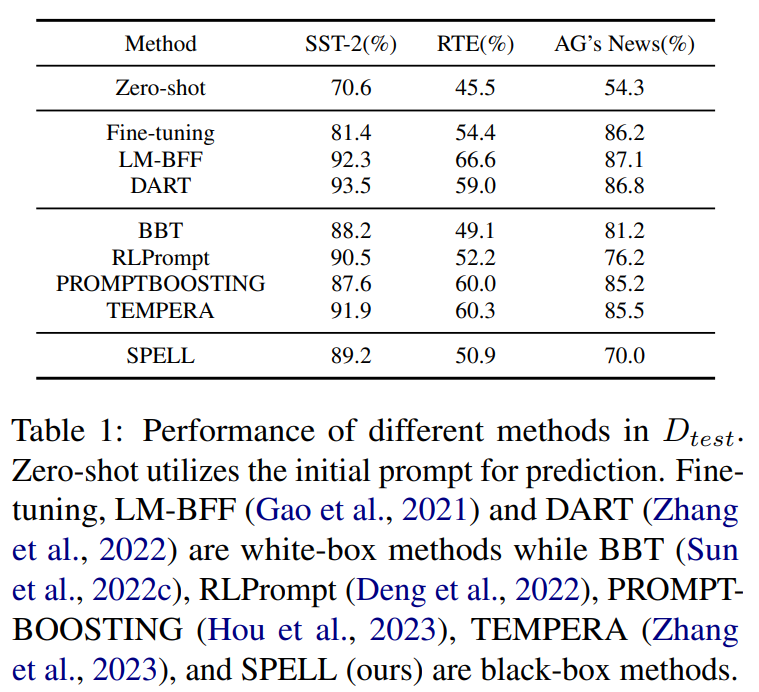

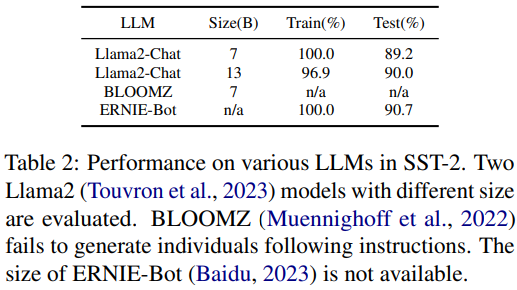

The evaluation shows that SPELL can improve the performance of prompts and has potential for prompt optimization problems.

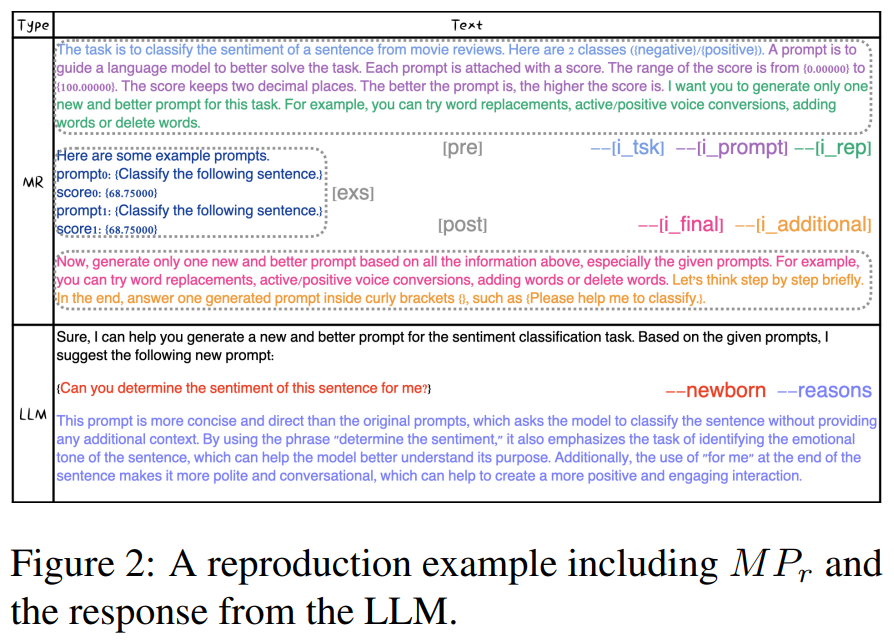

During reproduction, we find that the LLM, based on the information in the meta prompt, does generate coherent prompts in a semantically causal way, since a newborn prompt is "spelled" token by token through language modeling. Moreover, the LLM explains the reasons for its revisions.
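As a rough illustration of what such a meta prompt might contain, the sketch below assembles parent prompts with their scores into a single instruction and then extracts the new prompt and the stated reason from a reply. The wording, the `<prompt>` / `<reason>` tag convention, and the function names are assumptions made for this example; the exact meta prompt used in the paper is not reproduced here.

```python
import re
from typing import List, Optional, Tuple


def build_meta_prompt(task: str, parents: List[Tuple[str, float]]) -> str:
    """Assemble a meta prompt from scored parent prompts (hypothetical format)."""
    lines = [f"Task: {task}", "Existing prompts and their scores:"]
    for i, (prompt, score) in enumerate(parents, start=1):
        lines.append(f"{i}. [score={score:.2f}] {prompt}")
    lines.append("Write one improved prompt and explain your revision.")
    lines.append("Answer in the form:")
    lines.append("<prompt>new prompt</prompt>")
    lines.append("<reason>why it should work better</reason>")
    return "\n".join(lines)


def parse_response(text: str) -> Tuple[Optional[str], Optional[str]]:
    """Pull the new prompt and the explanation out of the model's reply."""

    def grab(tag: str) -> Optional[str]:
        match = re.search(rf"<{tag}>(.*?)</{tag}>", text, flags=re.DOTALL)
        return match.group(1).strip() if match else None

    return grab("prompt"), grab("reason")


meta = build_meta_prompt(
    "Sentiment classification of movie reviews",
    [("Is the review good or bad?", 0.62), ("Label the review.", 0.55)],
)

# A hand-written reply standing in for real LLM output.
fake_reply = (
    "<prompt>Decide whether the review is positive or negative.</prompt>\n"
    "<reason>It names both labels explicitly.</reason>"
)
new_prompt, reason = parse_response(fake_reply)
print(meta)
print(new_prompt, "|", reason)
```

Asking for the reason alongside the prompt mirrors the observation that the LLM explains its revisions, and it leaves a readable trace for each generated prompt.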

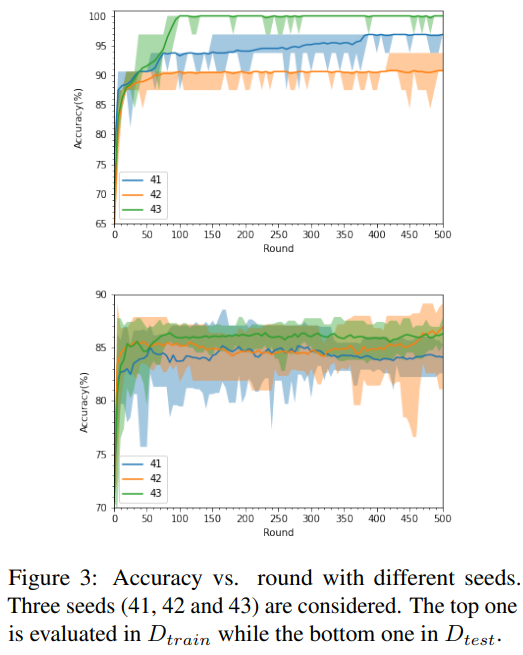

However, the optimization process shows that the proposed method is not stable, owing to the randomness of both the evolutionary algorithm and prompt generation.

Future work may focus on adapting an LLM to semantic prompt optimization, stabilizing the optimization, and extending semantic evolution beyond text optimization. We do believe that semantic optimization is important for intelligence and that LLMs are a good key for exploring it.